Differences

This shows you the differences between two versions of the page.


| jobs [2016/03/03 07:52] – [Theses and Jobs] ahaidu | jobs [2019/05/08 09:34] – [Theses and Student Jobs] haidu | ||

|---|---|---|---|

| Line 1: | Line 1: | ||

| ~~NOTOC~~ | ~~NOTOC~~ | ||

| - | =====Theses and Jobs===== | + | |

| + | =====Open researcher positions===== | ||

| + | |||

| + | =====Theses and Student | ||

| If you are looking for a bachelor/ | If you are looking for a bachelor/ | ||

| + | |||

| + | |||

| + | == Knowledge-enabled PID Controller for 3D Hand Movements in Virtual Environments (BA/MA Thesis) == | ||

| + | |||

| + | Implementing a force-, velocity- and impulse-based PID controller for precise and responsive hand movements in a virtual environment. The virtual environment is implemented in Unreal Engine and used in combination with Virtual Reality devices. The movements | ||

| + | of the human user will be mapped to the virtual hands, and controlled via the implemented PID controllers. | ||

| + | The controller should be able to dynamically tune itself depending on the executed actions (opening/ | ||

| + | |||

| + | Requirements: | ||

| + | * Good C++ programming skills | ||

| + | * Familiar with PID controllers and control theory | ||

| + | * Experience with simulators/ | ||

| + | * Experience with Unreal Engine | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | |||

| + | < | ||

| == Lisp / CRAM support assistant (HiWi) == | == Lisp / CRAM support assistant (HiWi) == | ||

| Technical support for the group for Lisp and the CRAM framework. \\ | Technical support for the group for Lisp and the CRAM framework. \\ | ||

| - | 5 hours per week for up to 1 year (paid). | + | 8+ hours per week for up to 1 year (paid). |

| Requirements: | Requirements: | ||

| Line 15: | Line 38: | ||

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

| + | == Mesh Editing / Mesh Segmentation/ | ||

| + | {{ : | ||

| + | | ||

| - | == Integrating PR2 in the Unreal Game Engine Framework | + | Requirements: |

| - | {{ : | + | * Good knowledge |

| + | * Familiar with Blender / Maya (or other) | ||

| - | Integrating the [[https:// | + | Contact: |

| + | |||

| + | |||

| + | < | ||

| + | == 3D Model / Material | ||

| + | {{ : | ||

| + | |||

| + | Developing and improving existing 3D models | ||

| + | |||

| + | Bonus: Working with state-of-the-art 3D scanners | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Experience with Blender |

| - | * Basic physics/rendering engine knowledge | + | * Knowledge of Unreal Engine material |

| - | * Basic ROS knowledge | + | * Familiar with version-control systems (git) |

| - | * UE4 basic tutorials | + | * Able to work independently with minimal supervision |

| + | |||

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

| - | == Kitchen Activity Games in a Realistic Robotic Simulator | + | < |

| - | {{ :research:gz_env1.png?200|}} | + | == Integrating PR2 in the Unreal Game Engine Framework |

| + | {{ :research:unreal_ros_pr2.png?100|}} | ||

| - | Developing new activities and improving the current simulation framework built on the [[http://gazebosim.org/|Gazebo]] robotic simulator. Creating a custom GUI for the game to launch new scenarios, save logs, etc. | + | Integrating |

| Requirements: | Requirements: | ||

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| * Basic physics/ | * Basic physics/ | ||

| - | * Gazebo simulator | + | * Basic ROS knowledge |

| + | * UE4 basic tutorials | ||

| Contact: [[team: | Contact: [[team: | ||

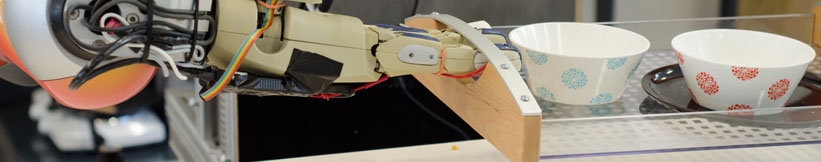

| + | == Realistic Grasping using Unreal Engine (BA/ | ||

| + | {{ : | ||

| - | == Automated sensor calibration toolkit (BA/MA)== | + | The objective of the project is to implement various |

| + | human-like grasping approaches in a game developed using [[https:// | ||

| - | Computer vision is an important part of autonomous robots. For robots, image sensors are the main source of information about the surrounding world. Cameras differ, even when they come from the same production line. For computer vision, especially for robots manipulating their environment, | + | The game consists |

| - | + | ||

| - | The topic for this thesis | + | |

| - | + | ||

| - | {{ : | + | |

| - | The system should: | + | |

| - | * be independent of the camera type | + | |

| - | * estimate intrinsic and extrinsic parameters | + | |

| - | * calibrate depth images (case of RGB-D) | + | |

| - | * integrate capabilities from Halcon [1] | + | |

| - | * operate autonomously | + | |

| + | To make manipulating objects easier, the user should | ||

| + | be able to switch the type of grasp (pinch, power | ||

| + | grasp, precision grip, etc.) at runtime. | ||

| + | | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in Python and C/C++ | + | * Good programming skills in C++ |

| - | * ROS, OpenCV | + | * Good knowledge of the Unreal Engine API. |

| + | * Experience with skeletal control / animations / 3D models in Unreal Engine. | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | [1] http:// | ||

| - | Contact: [[team: | + | Contact: [[team/ |

| + | --></ | ||

| - | == On-the-fly 3D CAD model creation | + | < |

| + | == Unreal Engine Editor Developer | ||

| + | {{ : | ||

| - | Create models during runtime | + | Creating new user interfaces (panel customization) |

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * strong background in computer vision | ||

| - | * ROS, OpenCV, PCL | ||

| - | Contact: [[team:thiemo_wiedemeyer|Thiemo Wiedemeyer]] | + | Requirements: |

| + | * Good C++ programming skills | ||

| + | * Familiar with the [[https:// | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | == Simulation of a robot's belief state to support perception (MA) == | + | Contact: [[team: |

| - | Create a simulation environment that represents the robot's current belief state and can be updated frequently. Use off-screen rendering to investigate the affordances these objects possess, in order to support segmentation, | ||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * strong background in computer vision | ||

| - | * Gazebo, OpenCV, PCL | ||

| - | Contact: [[team: | + | == OpenEASE rendering in Unreal Engine (BA/MA Thesis, Student Job / HiWi)== |

| - | == Multi-expert segmentation of cluttered and occluded scenes == | ||

| - | Objects in a human environment are usually found in challenging scenes. They can be stacked upon each other, touching or occluding one another, or located in drawers, cupboards, refrigerators and so on. To execute a task, a personal robot assistant needs to detect and recognize these objects. In this thesis a multi-modal approach to interpreting cluttered scenes is going to be investigated, | + | Implementing |

| - | + | ||

| - | Requirements: | + | |

| - | * Good programming skills in C/C++ | + | |

| - | * strong background in 3D vision | + | |

| - | * basic knowledge of ROS, OpenCV, PCL | + | |

| - | + | ||

| - | Contact: | + | |

| + | Requirements: | ||

| + | * Good C++ programming skills | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with HTML5 and JavaScript | ||

| + | * Familiar with the [[https:// | ||

| + | * Familiar with basic ROS communication | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | Contact: [[team: | ||

| + | --></ | ||

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
