Differences

This shows you the differences between two versions of the page.


| teaching:gsoc2015 [2015/03/19 07:24] – [Google Summer of Code 2015] winkler | teaching:gsoc2015 [2015/03/19 14:49] (current) – [Google Summer of Code 2015] gkazhoya | ||

|---|---|---|---|

| Line 13: | Line 13: | ||

| If you are interested in working on a topic and meet its general criteria, you should have a look at the [[teaching: | If you are interested in working on a topic and meet its general criteria, you should have a look at the [[teaching: | ||

| - | For a PDF-version of the ideas page, and a brief introduction of our research group, please see {{:teaching:gsoc: | + | For a PDF-version of the ideas page, and a brief introduction of our research group, please see {{: |

| Line 108: | Line 108: | ||

| Contact: [[team/ | Contact: [[team/ | ||

| + | |||

| + | |||

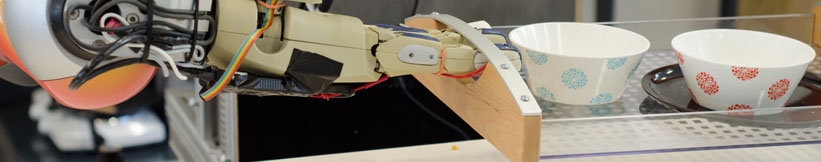

| + | ==== Topic 3: CRAM -- Symbolic Reasoning Tools with Bullet ==== | ||

| + | |||

| + | {{ : | ||

| + | |||

| + | **Main Objective: | ||

| + | |||

| + | {{ : | ||

| + | |||

| + | Possible sub-projects: | ||

| + | |||

| + | * assuring the physical consistency of the belief state by utilizing the integrated Bullet physics engine, i.e. correcting explicitly wrong data coming from sensors to the closest logically sound values; | ||

| + | * improving the representation of previously unseen objects in the belief state by, e.g. interpolating the point cloud into a valid 3D mesh | ||

| + | {{ : | ||

| + | * improving the visualization of the belief state and the intentions of the robot in RViz (such as, e.g., highlighting the next object to be manipulated, | ||

| + | * updating the internal world state to reflect changes in the environment, such as “a drawer has been opened” (up to storing the precise angle the door is at after opening), and emitting corresponding semantically meaningful events, e.g. an “object-fell-down” event when the z coordinate of the object changes drastically | ||

| + | * many other ideas that we can discuss based on applicants’ individual interests and abilities. | ||
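
As a rough illustration of the event-emission sub-project in the list above, the sketch below shows one way an "object-fell-down" event could be derived from successive z coordinates. It is not CRAM code. The `WorldState` and `Event` classes, the `update` method, and the `FALL_THRESHOLD_M` value are all hypothetical names and numbers chosen for this example, and Python is used only for brevity.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical threshold in metres: how far z must drop between two updates
# before the change counts as "drastic". Not taken from the project description.
FALL_THRESHOLD_M = 0.2


@dataclass(frozen=True)
class Event:
    """A semantically meaningful event derived from a world-state change."""
    name: str
    object_id: str
    old_z: float
    new_z: float


class WorldState:
    """Toy belief state that remembers the last known z coordinate per object."""

    def __init__(self) -> None:
        self._last_z: Dict[str, float] = {}

    def update(self, object_id: str, z: float) -> Optional[Event]:
        """Store the new z value and return an event if the object dropped sharply."""
        old_z = self._last_z.get(object_id)
        self._last_z[object_id] = z
        if old_z is not None and old_z - z > FALL_THRESHOLD_M:
            return Event("object-fell-down", object_id, old_z, z)
        return None


world = WorldState()
world.update("mug", 0.90)          # first observation: nothing to compare against
world.update("mug", 0.88)          # small change: below the threshold
event = world.update("mug", 0.05)  # large drop: an Event is returned
```

A real implementation would presumably compare poses reported by the physics-backed belief state rather than raw numbers, and would need to separate genuine falls from sensor noise or deliberate placement by the robot.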

| + | |||

| + | **Task Difficulty: | ||

| + | |||

| + | **Requirements: | ||

| + | |||

| + | **Expected Results:** We expect operational and robust contributions to the source code of the existing robot control system including minimal documentation and test coverage. | ||

| + | |||

| + | Contact: [[team/ | ||

| + | |||

| ==== Topic 4: Multi-modal Big Data Analysis for Robotic Everyday Manipulation Activities ==== | ==== Topic 4: Multi-modal Big Data Analysis for Robotic Everyday Manipulation Activities ==== | ||
