
SUTURO RoboCup@Home 2023

The HSR storing groceries. This video is accelerated to 600% speed. With a real-time factor of approximately 0.22, this results in an effective speed of about 132% (6.0 × 0.22 ≈ 1.32).

Welcome to the SUTURO@Home project! This project is organized through the CRC 1320 Everyday Activity Science and Engineering (EASE). EASE is an interdisciplinary research center at the University of Bremen that investigates everyday activity science and engineering. Its core purpose is to advance the understanding of how human-scale manipulation tasks can be mastered by robotic agents. Within EASE, general software frameworks for robotic agents are created and further developed. These frameworks (CRAM, KNOWROB, GISKARD, and ROBOKUDO) can also be applied in the RoboCup@Home context. There is also a substantial overlap between the robot capabilities required to pass the RoboCup@Home challenges and the capabilities that robots within EASE provide. SUTURO students benefit from the expertise that EASE researchers have acquired through multiple years of working with autonomous robots performing everyday activities. We further expect that applying the EASE software infrastructure in the RoboCup@Home domain will make it more robust and flexible.

The HSR cleaning up. This video is accelerated to 600% speed. With a real-time factor of approximately 0.22, this results in an effective speed of about 132% (6.0 × 0.22 ≈ 1.32).

Motivation and Goal

RoboCup@Home aims to provide a stage for service robots and the problems these robots can tackle, such as interacting with humans and helping them in their everyday lives. In the competition, the participants have to complete different challenges in two stages. We chose the following challenges:

Stage 1

- Clean up

- Storing Groceries

- Set the table

In the second challenge, the robot has to recognize objects placed on a table and then sort them into a shelf. The shelf already contains some objects, and the robot must categorize them so that it can correctly place the objects from the table. Furthermore, one door of the shelf will be closed, so the robot has to open it on its own.
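The sorting step described above can be sketched as follows. This is a minimal illustration only, not the team's implementation: the category table `CATEGORY_OF`, the shelf-level numbering, and the function `choose_shelf_level` are assumptions made for this example.

```python
from collections import Counter

# Hypothetical object-to-category table; in a real system this knowledge
# would come from an ontology rather than a hard-coded dict.
CATEGORY_OF = {
    "apple": "fruit",
    "banana": "fruit",
    "milk": "drink",
    "juice": "drink",
    "cereal": "food",
}


def choose_shelf_level(new_object, shelf_contents):
    """Return the shelf level whose objects best match new_object's category.

    shelf_contents maps a level number to the object names already on it.
    Returns None if no level holds an object of the same category.
    """
    category = CATEGORY_OF.get(new_object)
    best_level, best_count = None, 0
    for level, objects in sorted(shelf_contents.items()):
        counts = Counter(CATEGORY_OF.get(name) for name in objects)
        if category is not None and counts[category] > best_count:
            best_level, best_count = level, counts[category]
    return best_level


shelf = {0: ["milk"], 1: ["apple", "banana"], 2: []}
print(choose_shelf_level("juice", shelf))   # the level that already holds drinks
print(choose_shelf_level("cereal", shelf))  # no level with a matching category
```

When no level matches, the sketch returns `None` and leaves the fallback (for example, picking an empty level) to the caller.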

The stage 2 challenges are more extensive and require good management and execution to complete them in time. The challenge we chose requires the robot to set a table for lunch. The dishes are in a shelf as well as in a drawer, which the robot needs to open. The order of the knife, spoon and fork relative to the plate is also important.

To accomplish the challenges, the robot has to be able to:

- Navigate between two points, e.g. a table and a shelf,

- Recognize objects,

- Find categories for objects and sort the objects,

- Grasp the objects,

- Put the objects in the desired position.

Team

We are happy to present our whole team:

| Name | Email | Degree program |
|---|---|---|
| Ana Khasia | khasia[at]uni-bremen.de | Informatics M.Sc. |
| Malte Dörgeloh | mdoergel[at]uni-bremen.de | Informatics M.Sc. |
| Kamal Atteibi | atteibi[at]uni-bremen.de | Informatics M.Sc. |
| Tede von Knorre | tede[at]uni-bremen.de | Informatics B.Sc. |

Manipulation

| Name | Email | Degree program |
|---|---|---|
| Karla Brück | kbrueck[at]uni-bremen.de | Informatics M.Sc. |
| Phillip Kehr | pkehr[at]uni-bremen.de | Informatics B.Sc. |

Perception

| Name | Email | Degree program |
|---|---|---|
| Lennart Christian Heinbokel | lenhei[at]uni-bremen.de | Informatics M.Sc. |
| Naser Azizi | naser1[at]uni-bremen.de | Informatics M.Sc. |
| Sorin Arion | sorin[at]uni-bremen.de | Informatics M.Sc. |

Planning

| Name | Email | Degree program |
|---|---|---|
| Felix Krause | krause4[at]uni-bremen.de | Informatics B.Sc. |
| Luca Krohm | luc_kro[at]uni-bremen.de | Informatics B.Sc. |
| Tim Alexander Rienits | trienits[at]uni-bremen.de | Informatics M.Sc. |

Methodology and implementation

Knowledge:

To fulfill complex tasks, a robot needs knowledge and memory of its environment. While the robot acts in its world, it recognizes objects and manipulates them through pick-and-place tasks. With the use of KnowRob, a belief state provides an episodic memory of the robot's experience by recording each cognitive activity. Ontologies allow objects to be classified and put into context, which enables logical reasoning over the environment and intelligent decision making.

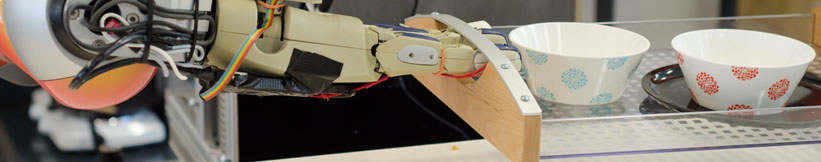

Manipulation:

For manipulation tasks in the environment, we use the open source motion planning framework Giskard.

It uses constraint and optimization based task space control to generate trajectories for the whole body of mobile manipulators.

Giskard offers interfaces to plan and execute motion goals and to modify its world model.

A selection of predefined basic motion goals can be arbitrarily combined to describe a motion.

If an environment model is present, such goals can also be defined on the environment, e.g., to open a door.

Simulation of the HSR opening a human-sized door. This gif is accelerated to 200% speed. With a real-time factor of approximately 0.46, this results in an effective speed of about 92% (2.0 × 0.46 ≈ 0.92).

Planning:

Planning is responsible for the high-level control and failure handling of the autonomous robot system, using generic cognitive strategies. It combines the perception, knowledge and manipulation frameworks in high-level plans written in the CRAM (Cognitive Robot Abstract Machine) system. CRAM enables the implementation of various recovery strategies for failures. It also provides a lightweight simulation tool for prospection, which simulates the potential outcome of the current plans and their parameters before they are executed on the real robot. This increases the success rate of the performed action by discarding faulty parameters in advance. CRAM also allows the high-level plans to be written generically, so that the plans are independent of the robot platform. Furthermore, the plan execution results can be recorded and reasoned about later, in order to adapt upcoming plans and increase their success.
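The combination of prospection and failure recovery described above can be illustrated with a small sketch. It is written in Python for brevity and does not use CRAM or its API. The names `simulate_grasp`, `prospect`, `execute_with_recovery` and `flaky_grasp`, as well as the numeric thresholds, are hypothetical and chosen only for illustration.

```python
class GraspFailure(Exception):
    """Raised when a grasp attempt fails."""


def simulate_grasp(approach_height):
    # Stand-in for a lightweight simulation: heights outside an
    # assumed reachable band (in meters) are predicted to fail.
    return 0.05 <= approach_height <= 0.30


def prospect(candidates):
    """Keep only the parameters that the simulation predicts to succeed."""
    return [h for h in candidates if simulate_grasp(h)]


def execute_with_recovery(action, candidates, max_attempts=3):
    """Try the pre-validated parameters in turn, recovering from failures."""
    for height in prospect(candidates)[:max_attempts]:
        try:
            return action(height)
        except GraspFailure:
            continue  # recovery strategy: retry with the next parameter
    raise GraspFailure("no viable parameter succeeded")


def flaky_grasp(height):
    # Hypothetical real-robot action that fails for low approaches.
    if height < 0.10:
        raise GraspFailure(f"grasp at {height} m failed")
    return f"grasped at {height} m"


result = execute_with_recovery(flaky_grasp, [0.01, 0.08, 0.15, 0.50])
print(result)
```

Here the simulation discards the candidates 0.01 and 0.50 before execution, and the retry loop handles the remaining failure on the real action.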

Perception:

The perception framework is responsible for processing the visual data received from the robot's camera sensors and for establishing the communication between high-level planning and visual perception. RoboKudo is an open source robotic perception framework based on the principles of unstructured information management.

The framework allows for the creation of perception systems that employ an ensemble-of-experts approach and treat perception as a question-answering problem.

Based on the queries issued to the system, a perception plan is created that consists of a list of experts to be executed.

The perception experts generate object hypotheses, annotate them, and test and rank them in order to arrive at the best possible interpretation of the data and generate the answer to the query. The methods provided by TUW are integrated as experts into the RoboKudo perception framework. This allows reasoning about the perception results and communication with the high-level planning in CRAM, closing the perception-action loop.
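The query-driven flow described above (query, perception plan, experts, ranked hypotheses) can be sketched in a few lines. This is not RoboKudo's API. The `Hypothesis` class, the two toy experts, and the `EXPERTS_FOR_QUERY` table are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    label: str
    confidence: float
    annotations: dict = field(default_factory=dict)


def color_expert(observation):
    """Hypothetical expert: guesses the object class from its color."""
    guesses = {"red": ("apple", 0.6), "yellow": ("banana", 0.7)}
    label, score = guesses.get(observation.get("color"), ("unknown", 0.1))
    return Hypothesis(label, score, {"expert": "color"})


def shape_expert(observation):
    """Hypothetical expert: guesses the object class from its shape."""
    guesses = {"round": ("apple", 0.8), "curved": ("banana", 0.9)}
    label, score = guesses.get(observation.get("shape"), ("unknown", 0.1))
    return Hypothesis(label, score, {"expert": "shape"})


# Assumed mapping from a query type to the experts that can answer it.
EXPERTS_FOR_QUERY = {"detect": [color_expert, shape_expert]}


def build_plan(query):
    """Create the perception plan: the list of experts to execute."""
    return EXPERTS_FOR_QUERY.get(query, [])


def answer(query, observation):
    """Run the plan, rank the hypotheses, and return the best one."""
    hypotheses = [expert(observation) for expert in build_plan(query)]
    ranked = sorted(hypotheses, key=lambda h: h.confidence, reverse=True)
    return ranked[0] if ranked else None


best = answer("detect", {"color": "red", "shape": "round"})
print(best.label, best.annotations)
```

A real system would combine evidence across experts rather than simply taking the single most confident hypothesis. The sketch only shows the control flow.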

Link to open source and research

- The Robot Household Marathon Experiment

- CRAM

- Know Rob 2.0

- RoboSherlock: Unstructured information processing for robot perception

- Manipulation Planning and Control for Shelf Replenishment

An open-source motion planning framework for mobile manipulators using constraint-based task space control with linear MPC.

By: Simon Stelter, Georg Bartels, and Michael Beetz.

In: 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS).

To be published by October 2022. IEEE. 2022.

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
