Differences

This shows you the differences between two versions of the page.


| jobs [2017/01/24 07:19] – [Theses and Jobs] bartelsg | jobs [2019/08/12 11:51] – [Theses and Student Jobs] mareikep | ||

|---|---|---|---|

| Line 1: | Line 1: | ||

| ~~NOTOC~~ | ~~NOTOC~~ | ||

| - | =====Theses and Jobs===== | + | |

| + | =====Open researcher positions===== | ||

| + | |||

| + | =====Theses and Student | ||

| If you are looking for a bachelor/ | If you are looking for a bachelor/ | ||

| + | |||

| + | |||

| + | |||

| + | == Visualization support assistant (HiWi) == | ||

| + | |||

| + | Implementing a visualization web page for a project using a custom framework using python/ | ||

| + | |||

| + | Requirements: | ||

| + | * Good Python programming skills | ||

| + | * Familiar with JavaScript | ||

| + | * Experience with web development is recommended | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | |||

| + | == Knowledge-enabled PID Controller for 3D Hand Movements in Virtual Environments (BA/MA Thesis) == | ||

| + | |||

| + | Implementing a force-, velocity- and impulse-based PID controller for precise and responsive hand movements in a virtual environment. The virtual environment used is Unreal Engine in combination with virtual reality devices. The movements | ||

| + | of the human user will be mapped to the virtual hands, and controlled via the implemented PID controllers. | ||

| + | The controller should be able to dynamically tune itself depending on the executed actions (opening/ | ||

| + | |||

| + | Requirements: | ||

| + | * Good C++ programming skills | ||

| + | * Familiar with PID controllers and control theory | ||

| + | * Experience with simulators/ | ||

| + | * Experience with Unreal Engine | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | |||

| + | Contact: [[team: | ||

| < | < | ||

| Line 7: | Line 42: | ||

| Technical support for the group for Lisp and the CRAM framework. \\ | Technical support for the group for Lisp and the CRAM framework. \\ | ||

| - | 5 hours per week for up to 1 year (paid). | + | 8+ hours per week for up to 1 year (paid). |

| Requirements: | Requirements: | ||

| Line 16: | Line 51: | ||

| Contact: [[team: | Contact: [[team: | ||

| - | --> | + | --></ |

| - | </ | + | |

| + | < | ||

| + | == Mesh Editing / Mesh Segmentation/ | ||

| + | {{ : | ||

| - | == Integrating PR2 in the Unreal Game Engine Framework (BA)== | + | |

| - | {{ : | + | |

| + | Requirements: | ||

| + | * Good knowledge of 3D modeling | ||

| + | * Familiar with Blender / Maya (or other) | ||

| + | |||

| + | Contact: [[team/ | ||

| + | --></ | ||

| + | |||

| + | < | ||

| + | == 3D Model / Material / Lighting Developer (Student Job / HiWi)== | ||

| + | {{ : | ||

| + | |||

| + | Developing and improving existing 3D models in Blender / Maya (or other). Importing the models into Unreal Engine, where the materials and lighting should be improved to be as close to realistic as possible. | ||

| + | |||

| + | Bonus: Working with state-of-the-art 3D scanners [[https:// | ||

| + | |||

| + | Requirements: | ||

| + | * Experience with Blender / Maya (or other) | ||

| + | * Knowledge of Unreal Engine material / lighting development | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | |||

| + | |||

| + | |||

| + | Contact: [[team: | ||

| + | --></ | ||

| + | |||

| + | < | ||

| + | == Integrating PR2 in the Unreal Game Engine Framework (BA/MA/HiWi)== | ||

| + | {{ : | ||

| Integrating the [[https:// | Integrating the [[https:// | ||

| Line 34: | Line 100: | ||

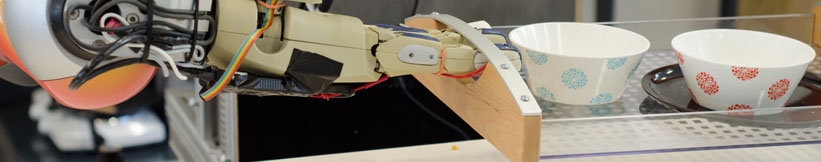

| - | == Realistic Grasping using Unreal Engine (BA/MA) == | + | == Realistic Grasping using Unreal Engine (BA/MA/HiWi) == |

| {{ : | {{ : | ||

| Line 51: | Line 117: | ||

| * Good knowledge of the Unreal Engine API. | * Good knowledge of the Unreal Engine API. | ||

| * Experience with skeletal control / animations / 3D models in Unreal Engine. | * Experience with skeletal control / animations / 3D models in Unreal Engine. | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team/ | Contact: [[team/ | ||

| + | --></ | ||

| - | == Kitchen Activity Games in a Realistic Robotic Simulator | + | < |

| - | {{ :research:gz_env1.png?200|}} | + | == Unreal Engine Editor Developer |

| + | {{ :research:unreal_editor.png?150|}} | ||

| + | |||

| + | Creating new user interfaces (panel customization) for various internal plugins using the Unreal C++ framework [[https:// | ||

| - | Developing new activities and improving the current simulation framework done under the [[http:// | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good C++ programming skills |

| - | * Basic physics/rendering engine knowledge | + | * Familiar with the [[https:// |

| - | * Gazebo simulator basic tutorials | + | * Familiar with Unreal Engine API |

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| Line 69: | Line 142: | ||

| + | == OpenEASE rendering in Unreal Engine (BA/MA Thesis, Student Job / HiWi)== | ||

| - | == Automated sensor calibration toolkit (BA/MA)== | ||

| - | Computer vision is an important part of autonomous robots. For robots the image sensors are the main source of information | + | Implementing |

| - | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. | + | Requirements: |

| - | + | * Good C++ programming skills | |

| - | {{ : | + | * Familiar with Unreal Engine API |

| - | The system should: | + | * Familiar with HTML5 and JavaScript |

| - | * be independent of the camera type | + | * Familiar with the [[https:// |

| - | * estimate intrinsic and extrinsic parameters | + | * Familiar with basic ROS communication |

| - | * calibrate depth images (case of RGB-D) | + | * Familiar with version-control systems (git) |

| - | * integrate capabilities from Halcon | + | * Able to work independently with minimal supervision |

| - | * operate autonomously | + | |

| - | Requirements: | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| - | * Good programming skills in Python and C/C++ | + | --></html> |

| - | * ROS, OpenCV | + | |

| - | + | ||

| - | [1] http:// | + | |

| - | + | ||

| - | Contact: [[team:alexis_maldonado|Alexis Maldonado]] and [[team: | + | |

| - | + | ||

| - | /* | + | |

| - | == On-the-fly 3D CAD model creation (MA)== | + | |

| - | + | ||

| - | Create models during runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, | + | |

| - | + | ||

| - | Requirements: | + | |

| - | * Good programming skills in C/C++ | + | |

| - | * strong background in computer vision | + | |

| - | * ROS, OpenCV, PCL | + | |

| - | + | ||

| - | Contact: [[team: | + | |

| - | + | ||

| - | == Simulation of a robot's belief state to support perception (MA) == | + | |

| - | + | ||

| - | Create a simulation environment that represents the robot's current belief state and can be updated frequently. Use off-screen rendering to investigate the affordances these objects possess, in order to support segmentation, | + | |

| - | + | ||

| - | Requirements: | + | |

| - | * Good programming skills in C/C++ | + | |

| - | * strong background in computer vision | + | |

| - | * Gazebo, OpenCV, PCL | + | |

| - | + | ||

| - | Contact: [[team: | + | |

| - | */ | + | |

| - | + | ||

| - | == Multi-expert segmentation of cluttered and occluded scenes == | + | |

| - | + | ||

| - | Objects in a human environment are usually found in challenging scenes. They can be stacked on each other, touching or occluding one another, or found in drawers, cupboards, refrigerators and so on. To execute a task, a personal robot assistant needs to detect and recognize these objects. In this thesis a multi-modal approach to interpreting cluttered scenes is going to be investigated, | + | |

| - | + | ||

| - | Requirements: | + | |

| - | * Good programming skills in C/C++ | + | |

| - | * strong background in 3D vision | + | |

| - | * basic knowledge of ROS, OpenCV, PCL | + | |

| - | + | ||

| - | Contact: [[team: | + | |

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
