Differences

This shows you the differences between two versions of the page.


| jobs [2015/09/08 12:24] – [Theses and Jobs] nyga | jobs [2023/06/22 05:59] – dkastens | ||

|---|---|---|---|

| Line 1: | Line 1: | ||

| ~~NOTOC~~ | ~~NOTOC~~ | ||

| - | =====Theses and Jobs===== | ||

| - | If you are looking for a bachelor/ | ||

| + | =====Open researcher positions===== | ||

| + | {{blog>: | ||

| - | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/ | ||

| - | {{ : | ||

| - | Developing new activities and improving the current simulation framework done under the [[http://gazebosim.org/ | + | < |

| + | <div style=" | ||

| + | </html> | ||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * Basic physics/ | ||

| - | * Gazebo simulator basic tutorials | ||

| - | Contact: [[team: | ||

| - | == Integrating Eye Tracking | + | =====Theses and Student Jobs===== |

| - | {{ : | + | If you are looking for a bachelor/ |

| + | |||

| + | |||

| + | < | ||

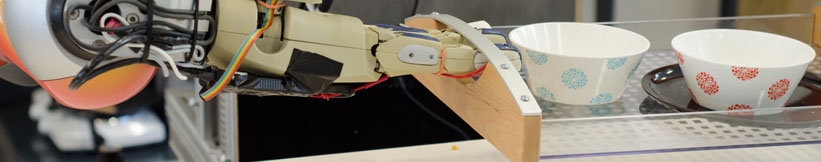

| + | == Physics-based grasping | ||

| - | Integrating the eye tracker in the [[http:// | + | Implementing physics-based grasping models in virtual environments, |

| + | using Manus VR. | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good C++ programming skills |

| - | * Gazebo simulator basic tutorials | + | * Familiar with skeletal animations |

| + | * Experience with simulators/ | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

| - | == Hand Skeleton Tracking Using Two Leap Motion Devices (BA/MA)== | ||

| - | {{ : | ||

| - | Improving the skeletal tracking offered by the [[https:// | + | < |

| + | == Lisp / CRAM support assistant | ||

| - | The tracked hand can then be used as input for the Kitchen Activity Games framework. | + | Technical support |

| + | 8+ hours per week for up to 1 year (paid). | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good programming skills in Common Lisp |

| + | * Basic ROS knowledge | ||

| - | Contact: [[team: | + | At the beginning of the job, the student will be introduced to CRAM, a robot programming framework written in Lisp. The student will then be responsible for assisting people who are not familiar with the framework, explaining the parts they don't understand, and pointing them to the relevant documentation. |

| - | == Fluid Simulation in Gazebo (BA/MA)== | + | Contact: [[team:gayane_kazhoyan|Gayane Kazhoyan]] |

| - | | + | --></ |

| - | [[http://gazebosim.org/|Gazebo]] currently only supports rigid body physics engines (ODE, Bullet etc.); however, in some cases fluids are needed to simulate the given environment as realistically as possible. | + | < |

| + | == Mesh Editing | ||

| + | {{ : | ||

| - | Currently there is an [[http://gazebosim.org/ | + | |

| + | |||

| + | Requirements: | ||

| + | * Good knowledge | ||

| + | * Familiar with Blender / Maya (or other) | ||

| + | |||

| + | Contact: | ||

| + | --></ | ||

| - | The computational method for the fluid simulation is SPH (smoothed-particle hydrodynamics); however, newer and better methods based on SPH are now available | ||

| - | and should be implemented (PCISPH/ | ||

| - | The interaction between the fluid and the rigid objects is a naive one, the forces and torques are applied only from the particle collisions | + | < |

| + | == 3D Animation | ||

| + | {{ : | ||

| - | Another topic would be the visualization of the fluid, which is currently done by rendering every particle. For the rendering engine [[http://www.ogre3d.org/ | + | Developing and improving existing or new 3D (static/skeletal) |

| + | models in Blender | ||

| + | models against Unreal Engine. | ||

| - | Here is a [[https://vimeo.com/104629835|video]] example of the current state of the fluid in Gazebo. | + | Bonus: Working with state-of-the-art 3D scanners |

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Experience with Blender |

| - | * Interest in Fluid simulation | + | * Knowledge of Unreal Engine material / lighting development |

| - | * Basic physics/ | + | * Familiar with version-control systems (git) |

| - | * Gazebo simulator and Fluidix basic tutorials | + | * Able to work independently with minimal supervision |

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

| + | == Generating Comics about Everyday Experiences of a Robot (BA Thesis) == | ||

| - | == Automated sensor calibration toolkit (BA/MA)== | + | Summary: |

| + | * Query experience data from an existing database | ||

| + | * Retrieve situations of interest | ||

| + | * Recreate the scene in a 3D environment | ||

| + | * Apply a comic shader | ||

| + | * Find good camera position for moments of interest | ||

| + | * Generate a PDF summarizing the experiences as a comic strip | ||

| - | Computer vision is an important part of autonomous robots. For robots, image sensors are the main source of information about the surrounding world. Each camera is different, even if they are from the same production line. For computer vision, especially for robots manipulating their environment, | + | Contact: [[team: |

| - | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. | + | == Integration of novel objects into Digital Twin Knowledge Bases (MA Thesis) == |

| - | {{ : | + | In this thesis, the goal is to make a robotic system learn new objects automatically. |

| - | The system should: | + | The system should be able to generate |

| - | * be independent of the camera type | + | |

| - | * estimate intrinsic | + | |

| - | * calibrate depth images (case of RGB-D) | + | |

| - | * integrate capabilities from Halcon [1] | + | |

| - | * operate autonomously | + | |

| - | Requirements: | + | The focus of the thesis would be two-fold: |

| - | * Good programming skills in Python | + | * Develop methods to automatically infer the object class of new objects. This would include perceiving the object with state-of-the-art sensors, constructing a 3D model of it, and then inferring the object class from online information sources. |

| - | * ROS, OpenCV | + | * In the second step, the system should also infer factual knowledge about the object from the internet and assert it into a robotic knowledge base. Such knowledge could include, for example, the product category and typical object properties such as weight or typical location. |

| - | [1] http:// | ||

| - | Contact: [[team: | + | Requirements: |

| + | * Knowledge about sensor data processing | ||

| + | * Interest in model construction from sensory data | ||

| + | * Work with KnowRob knowledge processing framework | ||

| - | == On-the-fly 3D CAD model creation (MA)== | ||

| - | Create models during runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, | + | Contact: [[team: |

| - | Requirements: | + | == Integration of nutritional information for recipes |

| - | * Good programming skills | + | |

| - | * strong background in computer vision | + | |

| - | * ROS, OpenCV, PCL | + | |

| - | Contact: [[team: | + | In this thesis, an existing website that provides nutritional information and additional functionality for products is to be extended with nutritional information for recipes. The website in question is: productkg.informatik.uni-bremen.de |

| - | == Simulation of a robot's belief state to support perception (MA) == | + | The tasks are: |

| + | * Comparison of existing solutions. | ||

| + | * Extension of an ontology with nutritional information. | ||

| + | * Extension of the website. | ||

| - | Create a simulation environment that represents the robot's current belief state and can be updated frequently. Use off-screen rendering to investigate the affordances these objects possess, in order to support segmentation, | ||

| - | Requirements: | + | Contact: [[team: |

| - | * Good programming skills in C/C++ | + | |

| - | * strong background in computer vision | + | |

| - | * Gazebo, OpenCV, PCL | + | |

| - | Contact: [[team: | ||

| - | == Multi-expert segmentation | + | < |

| + | == Development | ||

| + | In our research group, we focus on the development of modern robots that can make use of the potential of game engines. One particular research direction is the combination of computer vision with game engines. | ||

| + | In this context, we are currently offering multiple Hiwi positions / student jobs for the following tasks: | ||

| + | * Software development to create interfaces between ROS and Unreal Engine 4 (mainly C++) | ||

| + | * Software development for our Robot Perception framework [[http:// | ||

| - | Objects | + | Requirements: |

| + | * Experience | ||

| + | * Basic understanding of the ROS middleware and Linux. | ||

| + | The spoken language in this job is German | ||

| + | |||

| + | Contact: [[team: | ||

| + | --></ | ||

| + | |||

| + | == Game Engine Developer and 3D-Modelling | ||

| + | A recent development | ||

| + | In our research group, we focus on the development of modern robots that can make use of the potential of game engines. This requires | ||

| + | |||

| + | Therefore, we are currently offering | ||

| + | * Modelling of objects for the use in Unreal Engine 4. | ||

| + | * Creation of specific simulation aspects in Unreal Engine 4, for example the development of interactable objects. | ||

| + | |||

| + | Requirements: | ||

| + | * Knowledge of 3D-Modelling tools. Blender would be highly preferred. | ||

| + | * Experience in Game Engine development. Ideally Unreal Engine 4 and C++. | ||

| - | Requirements: | + | The spoken language |

| - | * Good programming skills | + | |

| - | * strong background in 3D vision | + | |

| - | * basic knowledge of ROS, OpenCV, PCL | + | |

| - | Contact: [[team:ferenc_balint-benczedi|Ferenc Balint-Benczedi]] | + | Contact: [[team:patrick_mania|Patrick Mania]] |

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
