Differences

This shows you the differences between two versions of the page.


| jobs [2015/03/23 13:18] – raider | jobs [2021/04/16 08:41] – [Theses and Student Jobs] kording | ||

|---|---|---|---|

| Line 1: | Line 1: | ||

| ~~NOTOC~~ | ~~NOTOC~~ | ||

| - | =====Theses and Jobs===== | + | |

| + | =====Open researcher positions===== | ||

| + | |||

| + | ====Digital Twin Knowledge Base for submarine robot inspection/ | ||

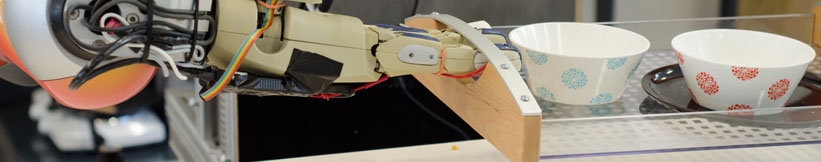

| + | The Institute for Artificial Intelligence (IAI) investigates methods for cognition-enabled robot control. The research is at the intersection of robotics and Artificial Intelligence and includes methods for intelligent perception, dexterous object manipulation, | ||

| + | |||

| + | As a researcher at the IAI, you will conduct research by applying and extending the established methods and tools of the IAI (e.g., CRAM, KnowRob, and openEASE) to the uncertainties of limited resources, computation, | ||

| + | |||

| + | **Prerequisites: | ||

| + | * Digital Twins | ||

| + | * Knowledge Representation | ||

| + | * Data structures | ||

| + | * Data Stream Representation. | ||

| + | |||

| + | **Hiring institution: | ||

| + | |||

| + | **PhD Enrollment: | ||

| + | |||

| + | Admission to the PhD examination requires a “Certificate of Equivalence for Foreign Vocational Qualifications”. More information is available at [[https:// | ||

| + | |||

| + | **Duration of the project:** 36 months | ||

| + | |||

| + | **Main Academic Supervisor: | ||

| + | |||

| + | **Co-supervisors: | ||

| + | =====Theses and Student | ||

| If you are looking for a bachelor/ | If you are looking for a bachelor/ | ||

| - | == GPU-based Parallelization of Numerical Optimization Techniques (BA/ | ||

| - | In the field of Machine Learning, numerical optimization techniques play a focal role. However, as models grow larger, traditional implementations on single-core CPUs suffer from sequential execution causing | + | < |

| + | == Knowledge-enabled PID Controller for 3D Hand Movements in Virtual Environments (BA/MA Thesis) == | ||

| + | |||

| + | Implementing | ||

| + | of the human user will be mapped | ||

| + | The controller should | ||

| Requirements: | Requirements: | ||

| - | | + | * Good C++ programming skills |

| - | | + | * Familiar with PID controllers |

| + | * Experience with simulators/physics-/ | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | --></ | ||

| - | == Online Learning of Markov Logic Networks for Natural-Language Understanding (MA)== | ||

| - | Markov Logic Networks | + | |

| + | < | ||

| + | == Natural Physics-based Grasping in Virtual Environments | ||

| + | |||

| + | Implementing physics-based grasping | ||

| + | from various devices such as Manus VR or Valve Index. | ||

| Requirements: | Requirements: | ||

| - | * Experience in Machine Learning. | + | * Good C++ programming skills |

| - | * Experience with statistical relational learning | + | * Familiar with skeletal animations |

| - | * Good programming skills in Python. | + | * Experience with simulators/ |

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems | ||

| + | * Able to work independently with minimal supervision | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | --></ | ||

| - | ==HiWi-Position: Knowledge Representation & Language Understanding for Intelligent Robots== | + | < |

| + | == Lisp / CRAM support assistant (HiWi) == | ||

| + | |||

| + | Technical support for the group for Lisp and the CRAM framework. \\ | ||

| + | 8+ hours per week for up to 1 year (paid). | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in Common Lisp | ||

| + | * Basic ROS knowledge | ||

| - | In the context | + | The student will be introduced to the CRAM framework at the beginning |

| - | are investigating methods for combining multimodal sources of knowledge (e.g. video, natural-language recipes or computer games), in order to enable mobile robots to autonomously acquire new high level skills like cooking meals or straightening up rooms. | + | |

| - | The Institute for Artificial Intelligence is hiring a student researcher for the | + | Contact: [[team: |

| - | development and the integration of probabilistic methods in AI, which enable intelligent robots to understand, interpret and execute natural-language instructions from recipes from the World Wide Web. | + | --></ |

| - | This HiWi-Position can serve as a starting point for future Bachelor' | + | < |

| + | == Mesh Editing / Mesh Segmentation/ | ||

| + | {{ : | ||

| - | Tasks: | + | Editing and cutting a human mesh into different parts in Blender / Maya (or other). |

| - | * Implementation of an interface to the Robot Operating System | + | |

| - | * Linkage of the knowledge base to the executive of the robot. | + | |

| - | * Support for the scientific staff in extending and integrating components onto the robot platform PR2. | + | |

| Requirements: | Requirements: | ||

| - | * Studies | + | * Good knowledge |

| - | * Basic skills in Artificial Intelligence | + | * Familiar with Blender / Maya (or other) |

| - | * Optional: basic skills in Probability Theory | + | |

| - | * Optional: basic skills in Machine Learning | + | |

| - | * Good programming skills in Python and Java | + | |

| - | Hours: 10-20 h/week | + | Contact: [[team/ |

| + | --></html> | ||

| - | Contact: [[team: | ||

| - | [1] www.robohow.eu\\ | ||

| - | [2] http:// | ||

| + | < | ||

| + | == 3D animation and model developer (Student Job / HiWi)== | ||

| + | {{ : | ||

| - | == Kitchen Activity Games in a Realistic Robotic Simulator | + | Developing and improving existing or new 3D (static/skeletal) |

| - | {{ : | + | models in Blender |

| + | models against Unreal Engine. | ||

| - | Developing new activities and improving the current simulation framework done under the [[http://gazebosim.org/|Gazebo]] robotic simulator. Creating a custom GUI for the game, in order to launch | + | Bonus: Working with state-of-the-art 3D scanners |

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Experience with Blender |

| - | * Basic physics/rendering engine knowledge | + | * Knowledge of Unreal Engine material |

| - | * Gazebo simulator basic tutorials | + | * Familiar with version-control systems (git) |

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

| - | == Integrating Eye Tracking in the Kitchen Activity Games (BA/MA)== | ||

| - | {{ : | ||

| - | Integrating | + | < |

| + | == Integrating | ||

| + | {{ : | ||

| + | |||

| + | Integrating | ||

| Requirements: | Requirements: | ||

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| - | * Gazebo simulator | + | * Basic physics/ |

| + | * Basic ROS knowledge | ||

| + | * UE4 basic tutorials | ||

| Contact: [[team: | Contact: [[team: | ||

| - | == Hand Skeleton Tracking Using Two Leap Motion Devices (BA/MA)== | ||

| - | {{ : | ||

| - | Improving the skeletal tracking offered by the [[https://developer.leapmotion.com/ | + | == Realistic Grasping using Unreal Engine (BA/MA/HiWi) == |

| - | The tracked hand can then be used as input for the Kitchen Activity Games framework. | + | {{ : |

| - | Requirements: | + | The objective of the project is to implement various |

| - | * Good programming skills | + | human-like grasping approaches |

| - | Contact: [[team: | + | The game consists of a household environment where a user has to execute various given tasks, such as cooking a dish, setting the table, or cleaning the dishes. The interaction is done using various sensors that map the user's hands onto the virtual hands in the game. |

| - | == Fluid Simulation in Gazebo | + | In order to improve the ease of manipulating objects the user should |

| - | {{ :research: | + | be able to switch during runtime the type of grasp (pinch, power |

| + | grasp, precision grip etc.) he/she would like to use. | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C++ | ||

| + | * Good knowledge of the Unreal Engine API. | ||

| + | * Experience with skeletal control / animations / 3D models in Unreal Engine. | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | [[http:// | ||

| - | Currently there is an [[http:// | + | Contact: |

| + | --></html> | ||

| - | The computational method for the fluid simulation is SPH (Smoothed-Particle Hydrodynamics), however newer and better methods based on SPH are currently present | + | < |

| - | and should be implemented | + | == Unreal Engine Editor Developer |

| + | {{ : | ||

| - | The interaction between the fluid and the rigid objects is a naive one, the forces and torques are applied only from the particle collisions | + | Creating new user interfaces |

| - | Another topic would be the visualization of the fluid, which is currently done by rendering every particle. For the rendering engine [[http:// | ||

| - | Here is a [[https://vimeo.com/104629835|video]] example of the current state of the fluid in Gazebo. | + | Requirements: |

| + | * Good C++ programming skills | ||

| + | * Familiar with the [[https://docs.unrealengine.com/latest/ | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | |||

| + | |||

| + | == OpenEASE rendering in Unreal Engine (BA/MA Thesis, Student Job / HiWi)== | ||

| + | |||

| + | |||

| + | Implementing | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good C++ programming skills |

| - | * Interest in Fluid simulation | + | * Familiar with Unreal Engine API |

| - | * Basic physics/rendering engine knowledge | + | * Familiar with HTML5 and JavaScript |

| - | * Gazebo simulator and Fluidix | + | * Familiar with the [[https:// |

| + | * Familiar with basic ROS communication | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| + | < | ||

| + | == Knowledge-enabled PID Controller for 3D Hand Movements in Virtual Environments (BA/MA Thesis) == | ||

| - | == Automated sensor calibration toolkit | + | Implementing a force-, velocity- and impulse-based PID controller for precise and responsive hand movements in a virtual environment. The virtual environment used is Unreal Engine in combination with Virtual Reality devices. The movements |

| + | of the human user will be mapped to the virtual hands, and controlled via the implemented PID controllers. | ||

| + | The controller should be able to dynamically tune itself depending on the executed actions | ||

| - | Computer vision is an important part of autonomous robots. For robots the image sensors are the main source of information of the surrounding world. Each camera is different, even if they are from the same production line. For computer vision, especially for robots manipulating their environment, | + | Requirements: |

| + | * Good C++ programming skills | ||

| + | * Familiar with PID controllers | ||

| + | * Experience with simulators/ | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. | + | Contact: [[team: |

| + | --></ | ||

| - | {{ : | + | == Voice-controlled guidance system |

| - | The system should: | + | |

| - | * be independent of the camera type | + | |

| - | * estimate intrinsic and extrinsic parameters | + | |

| - | * calibrate depth images | + | |

| - | * integrate capabilities from Halcon [1] | + | |

| - | * operate autonomously | + | |

| - | Requirements: | + | Further development of a voice-controlled system that guides users to the products they are looking for in retail stores, for smartphones (Android), based on anchor points and a connection to knowledge graphs. |

| - | * Good programming skills in Python and C/C++ | + | The work builds on existing apps. |

| - | * ROS, OpenCV | + | |

| - | [1] http:// | + | Tasks: |

| + | * App development with the Unity game engine and Flutter | ||

| + | * Extending the voice control | ||

| + | * Working with knowledge representation and knowledge graphs for the voice control | ||

| - | Contact: [[team: | ||

| - | == On-the-fly 3D CAD model creation (MA)== | + | Contact: [[team: |

| - | Create models during runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, | + | == Language selection for shopping assistants (BA Thesis) == |

| - | Requirements: | + | Extending a retail product information system for smartphones (Android) with a language selection. Display of the respective information |

| - | * Good programming skills in C/C++ | + | The work builds on existing apps. |

| - | * strong background | + | |

| - | * ROS, OpenCV, PCL | + | |

| - | Contact: [[team: | + | Tasks: |

| + | * App development with the Unity game engine and Flutter | ||

| + | * Extending the app with a language selection | ||

| + | * Working with knowledge representation and knowledge graphs for language modelling | ||

| - | == Simulation of a robot's belief state to support perception (MA) == | ||

| - | Create a simulation environment that represents the robot's current belief state and can be updated frequently. Use off-screen rendering to investigate the affordances these objects possess, in order to support segmentation, | + | Contact: [[team: |

| - | Requirements: | + | == Situational awareness |

| - | * Good programming skills | + | |

| - | * strong background in computer vision | + | |

| - | * Gazebo, OpenCV, PCL | + | |

| - | Contact: [[team: | + | This is a knowledge representation topic including knowledge graphs. The idea is to link external Web knowledge to an existing knowledge framework in order to include situational awareness, so that a robot acting in a household environment can infer what an object is used for in a given situation. |

| - | == Multi-expert segmentation of cluttered and occluded scenes == | + | A result would be that a spoon next to a bowl with cereal would be used for eating while a spoon on a stove next to a pot would be used for stirring. |

| - | Objects in a human environment are usually found in challenging scenes. They can be stacked upon each other, touching or occluding, can be found in drawers, cupboards, refrigerators and so on. A personal robot assistant, in order to execute a task, needs to detect these objects | + | Requirements: |

| + | * Work with KnowRob knowledge processing framework | ||

| + | * Work with knowledge graphs and Linked Data to create | ||

| + | * Implement reasoning about situations (based | ||

| + | |||

| + | |||

| + | Contact: [[team: | ||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * strong background in 3D vision | ||

| - | * basic knowledge of ROS, OpenCV, PCL | ||

| - | Contact: [[team: | ||

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
