Differences

This shows you the differences between two versions of the page.


| jobs [2014/08/29 11:12] – [Theses and Jobs] ahaidu | jobs [2019/08/12 11:54] – [Theses and Student Jobs] mareikep | ||

|---|---|---|---|

| Line 1: | Line 1: | ||

| ~~NOTOC~~ | ~~NOTOC~~ | ||

| - | =====Theses and Jobs===== | + | |

| + | =====Open researcher positions===== | ||

| + | |||

| + | =====Theses and Student | ||

| If you are looking for a bachelor/ | If you are looking for a bachelor/ | ||

| - | == GPU-based Parallelization of Numerical Optimization Techniques | + | == Visualization support assistant |

| - | In the field of Machine Learning, numerical optimization techniques play a focal role. However, as models grow larger, traditional implementations on single-core CPUs suffer from sequential execution causing | + | Implementing |

| Requirements: | Requirements: | ||

| - | | + | * Good Python |

| - | | + | * Familiar with JavaScript |

| + | * Experience with web development is recommended | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:mareike_picklum|Mareike Picklum]] |

| - | == Online Learning of Markov Logic Networks for Natural-Language Understanding (MA)== | ||

| - | Markov Logic Networks | + | == Knowledge-enabled PID Controller for 3D Hand Movements in Virtual Environments |

| + | |||

| + | Implementing a force-, velocity- and impulse-based PID controller for precise and responsive hand movements in a virtual environment. The virtual environment runs in Unreal Engine in combination with Virtual Reality devices. The movements | ||

| + | of the human user will be mapped | ||

| + | The controller should be able to dynamically tune itself depending on the executed actions (opening/ | ||

| Requirements: | Requirements: | ||

| - | * Experience | + | |

| - | * Experience with statistical relational learning | + | * Familiar with PID controllers and control theory |

| - | * Good programming skills in Python. | + | |

| + | * Experience with Unreal Engine | ||

| + | * Familiar with version-control systems | ||

| + | * Able to work independently with minimal supervision | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | < | ||

| + | == Lisp / CRAM support assistant (HiWi) == | ||

| - | ==HiWi-Position: | + | Technical support |

| + | 8+ hours per week for up to 1 year (paid). | ||

| - | In the context | + | Requirements: |

| - | are investigating methods for combining multimodal sources of knowledge (e.g. video, natural-language recipes or computer games) in order to enable mobile robots to autonomously acquire new high-level skills like cooking meals or straightening up rooms. | + | * Good programming skills in Common Lisp |

| + | * Basic ROS knowledge | ||

| + | |||

| + | The student will be introduced to the CRAM framework at the beginning | ||

| - | The Institute for Artificial Intelligence is hiring a student researcher for the | + | Contact: [[team: |

| - | development and integration of probabilistic methods in AI, which enable intelligent robots to understand, interpret and execute natural-language instructions from recipes on the World Wide Web. | + | --></ |

| - | This HiWi-Position can serve as a starting point for future Bachelor' | + | < |

| + | == Mesh Editing / Mesh Segmentation/ | ||

| + | {{ : | ||

| - | Tasks: | + | Editing and cutting a human mesh into different parts in Blender / Maya (or other). |

| - | * Implementation of an interface to the Robot Operating System | + | |

| - | * Linkage of the knowledge base to the executive of the robot. | + | |

| - | * Support for the scientific staff in extending and integrating components onto the robot platform PR2. | + | |

| Requirements: | Requirements: | ||

| - | * Studies | + | * Good knowledge |

| - | * Basic skills in Artificial Intelligence | + | * Familiar with Blender / Maya (or other) |

| - | * Optional: basic skills in Probability Theory | + | |

| - | * Optional: basic skills in Machine Learning | + | |

| - | * Good programming skills in Python and Java | + | |

| - | Hours: 10-20 h/week | + | Contact: [[team: |

| + | --></html> | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | < |

| + | == 3D Model / Material / Lighting Developer (Student Job / HiWi)== | ||

| + | | ||

| - | [1] www.robohow.eu\\ | + | Developing and improving existing 3D models in Blender |

| - | [2] http://www.youtube.com/ | + | |

| + | Bonus: Working with state-of-the-art 3D scanners [[https:// | ||

| - | == Depth-Adaptive Superpixels (BA/MA)== | + | Requirements: |

| - | | + | * Experience with Blender / Maya (or other) |

| - | We are currently investigating a new set of sensors | + | * Knowledge |

| + | * Familiar | ||

| + | * Able to work independently with minimal supervision | ||

| - | Since the current implementation of DASP performs poorly on high-resolution images, there are several options for a project in this field, such as reimplementing DASP using CUDA, investigating how thermal data can be integrated, ... | ||

| - | Requirements: | ||

| - | * Basic knowledge of image processing | ||

| - | * Good programming skills in C/C++. | ||

| - | * Experience with CUDA is helpful | ||

| - | Contact: [[team:jan-hendrik_worch|Jan-Hendrik Worch]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | --></ | ||

| + | < | ||

| + | == Integrating PR2 in the Unreal Game Engine Framework (BA/ | ||

| + | {{ : | ||

| - | == Physical Simulation of Humans (BA/MA)== | + | Integrating the [[https:// |

| - | | + | |

| - | + | ||

| - | For tracking people, | + | |

| Requirements: | Requirements: | ||

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| - | * Optional: Experience in working with physics | + | * Basic physics/rendering engine knowledge |

| + | * Basic ROS knowledge | ||

| + | * UE4 basic tutorials | ||

| - | Contact: [[team:jan-hendrik_worch|Jan-Hendrik Worch]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| - | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/ | ||

| - | {{ : | ||

| - | Developing new activities and improving the current simulation framework done under the [[http://gazebosim.org/ | + | == Realistic Grasping using Unreal Engine (BA/MA/HiWi) == |

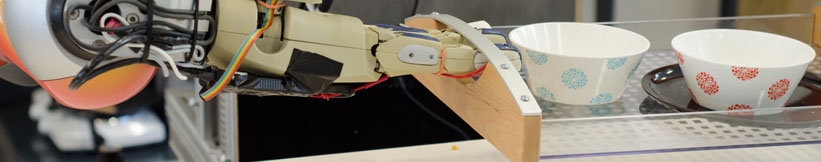

| - | Requirements: | + | {{ : |

| - | * Good programming skills in C/C++ | + | |

| - | * Basic physics/ | + | |

| - | * Gazebo simulator basic tutorials | + | |

| - | Contact: | + | The objective of the project is to implement various |

| + | human-like grasping approaches in a game developed using [[https:// | ||

| - | == Integrating Eye Tracking | + | The game consists of a household environment where a user has to execute various given tasks, such as cooking a dish, setting the table or cleaning the dishes. The interaction is done using various sensors that map the user's hands onto the virtual hands in the game. |

| - | {{ : | + | |

| - | Integrating | + | In order to improve |

| + | be able to switch | ||

| + | grasp, precision grip etc.) he/she would like to use. | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C++ | ||

| + | * Good knowledge of the Unreal Engine API. | ||

| + | * Experience with skeletal control / animations / 3D models in Unreal Engine. | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * Gazebo simulator basic tutorials | ||

| - | Contact: [[team:andrei_haidu|Andrei Haidu]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | --></ | ||

| - | == Hand Skeleton Tracking Using Two Leap Motion Devices | + | < |

| - | {{ :research:leap_motion.jpg?200|}} | + | == Unreal Engine Editor Developer |

| + | {{ :research:unreal_editor.png?150|}} | ||

| - | Improving the skeletal tracking offered by the [[https://developer.leapmotion.com/|Leap Motion SDK]] by using two devices (one tracking vertically, the other horizontally) and switching to whichever device currently has the best view of the hand. | + | Creating new user interfaces (panel customization) for various internal plugins using the Unreal C++ framework |

| - | The tracked hand can then be used as input for the Kitchen Activity Games framework. | ||

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good C++ programming skills |

| + | * Familiar with the [[https:// | ||

| + | * Familiar with Unreal Engine API | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| - | == Fluid Simulation in Gazebo (BA/MA)== | ||

| - | {{ : | ||

| - | [[http:// | ||

| - | Currently there is an [[http:// | + | == OpenEASE rendering |

| - | The computational method for the fluid simulation is SPH (smoothed-particle hydrodynamics); however, newer and better methods based on SPH are currently available | ||

| - | and should be implemented (PCISPH/ | ||

| - | The interaction between | + | Implementing |

| - | + | ||

| - | Another topic would be the visualization | + | |

| - | + | ||

| - | Here is a [[https:// | + | |

| Requirements: | Requirements: | ||

| - | * Good programming skills in C/C++ | + | * Good C++ programming skills |

| - | * Interest in fluid simulation | + | * Familiar with Unreal Engine API |

| - | * Basic physics/rendering engine knowledge | + | * Familiar with HTML5 and JavaScript |

| - | * Gazebo simulator and Fluidix | + | * Familiar with the [[https:// |

| + | * Familiar with basic ROS communication | ||

| + | * Familiar with version-control systems (git) | ||

| + | * Able to work independently with minimal supervision | ||

| Contact: [[team: | Contact: [[team: | ||

| + | --></ | ||

Prof. Dr. h.c. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
