Differences

This shows you the differences between two versions of the page.


| jobs [2014/08/29 11:12] – [Theses and Jobs] ahaidu | jobs [2016/03/03 07:58] – [Theses and Jobs] ahaidu | ||

|---|---|---|---|

| Line 3: | Line 3: | ||

| If you are looking for a bachelor/ | If you are looking for a bachelor/ | ||

| + | == Lisp / CRAM support assistant (HiWi) == | ||

| - | + | Technical support for the group for Lisp and the CRAM framework. \\ | |

| - | == GPU-based Parallelization of Numerical Optimization Techniques (BA/ | + | 5 hours per week for up to 1 year (paid). |

| - | + | ||

| - | In the field of Machine Learning, numerical optimization techniques play a focal role. However, as models grow larger, traditional implementations on single-core CPUs suffer from sequential execution causing a severe slow-down. In this thesis, state-of-the-art GPU frameworks | + | |

| Requirements: | Requirements: | ||

| - | | + | * Good programming skills in Common Lisp |

| - | | + | * Basic ROS knowledge |

| - | + | ||

| - | Contact: [[team: | + | |

| - | + | ||

| - | == Online Learning of Markov Logic Networks for Natural-Language Understanding (MA)== | + | |

| - | + | ||

| - | Markov Logic Networks (MLNs) combine the expressive power of first-order logic and probabilistic graphical models. In the past, they have been successfully applied to the problem of semantically interpreting and completing natural-language instructions from the web. State-of-the-art learning techniques mostly operate in batch mode, i.e. all training instances need to be known at the beginning of the learning process. In the context of this thesis, online learning methods for MLNs are to be investigated, | + | |

| - | + | ||

| - | Requirements: | + | |

| - | * Experience in Machine Learning. | + | |

| - | * Experience with statistical relational learning (e.g. MLNs) is helpful. | + | |

| - | * Good programming skills in Python. | + | |

| - | + | ||

| - | Contact: [[team: | + | |

| - | ==HiWi-Position: | + | At the beginning of the job, the student will be introduced to CRAM, a robot programming framework written in Lisp. The student will then be responsible |

| - | In the context of the European research project RoboHow.Cog | + | Contact: |

| - | are investigating methods for combining multimodal sources of knowledge (e.g. video, natural-language recipes or computer games), in order to enable mobile robots to autonomously acquire new high level skills like cooking meals or straightening up rooms. | + | |

| - | The Institute for Artificial Intelligence is hiring a student researcher for the | ||

| - | development and the integration of probabilistic methods in AI, which enable intelligent robots to understand, interpret and execute natural-language instructions from recipes from the World Wide Web. | ||

| - | This HiWi-Position can serve as a starting point for future Bachelor' | + | == Integrating PR2 in the Unreal Game Engine Framework (BA)== |

| + | {{ : | ||

| - | Tasks: | + | Integrating |

| - | * Implementation of an interface to the Robot Operating System (ROS). | + | |

| - | * Linkage of the knowledge base to the executive of the robot. | + | |

| - | * Support for the scientific staff in extending and integrating components onto the robot platform PR2. | + | |

| Requirements: | Requirements: | ||

| - | * Studies | + | * Good programming skills |

| - | * Basic skills in Artificial Intelligence | + | * Basic physics/ |

| - | * Optional: basic skills in Probability Theory | + | * Basic ROS knowledge |

| - | * Optional: | + | * UE4 basic tutorials |

| - | * Good programming skills in Python and Java | + | |

| - | Hours: 10-20 h/week | + | Contact: [[team: |

| - | Contact: [[team: | ||

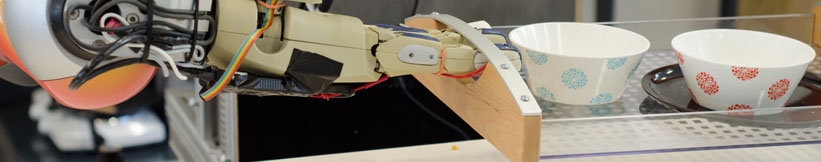

| - | [1] www.robohow.eu\\ | + | == Realistic Grasping using Unreal Engine (BA/MA) == |

| - | [2] http:// | + | |

| + | {{ : | ||

| - | == Depth-Adaptive Superpixels (BA/MA)== | + | The objective of the project is to implement various |

| - | {{ : | + | human-like grasping approaches in a game developed using [[https:// |

| - | We are currently investigating a new set of sensors (RGB-D-T), which is a combination of a kinect with a thermal image camera. Within this project we want to enhance the Depth-Adaptive Superpixels (DASP) to make use of the thermal sensor data. Depth-Adaptive Superpixels oversegment an image taking into account the depth value of each pixel. | + | |

| - | Since the current implementation | + | The game consists |

| - | Requirements: | + | To make manipulating objects easier, the user should |

| - | * Basic knowledge of image processing | + | be able to switch at runtime between grasp types (pinch, power |

| - | * Good programming skills in C/C++. | + | grasp, precision grip, etc.). |

| - | * Experience with CUDA is helpful | + | |

| + | Requirements: | ||

| + | * Good programming skills in C++ | ||

| + | * Good knowledge of the Unreal Engine API. | ||

| + | * Experience with skeletal control / animations / 3D models in Unreal Engine. | ||

| - | Contact: [[team: | ||

| - | |||

| - | |||

| - | == Physical Simulation of Humans (BA/MA)== | ||

| - | {{ : | ||

| - | |||

| - | For tracking people, the use of particle filters is a common approach. However, the quality of those filters heavily depends on the way particles are spread. In this thesis, a library for the physical simulation of a human model is to be implemented. | ||

| - | |||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * Optional: Experience in working with physics libraries such as Bullet | ||

| - | Contact: [[team: | + | Contact: [[team/ |

| - | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/MA/HiWi)== | + | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/MA)== |

| {{ : | {{ : | ||

| Line 94: | Line 64: | ||

| Contact: [[team: | Contact: [[team: | ||

| - | == Integrating Eye Tracking in the Kitchen Activity Games (BA/MA)== | ||

| - | {{ : | ||

| - | Integrating the eye tracker in the [[http:// | ||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * Gazebo simulator basic tutorials | ||

| - | Contact: [[team: | + | == Automated sensor calibration toolkit (BA/MA)== |

| - | == Hand Skeleton Tracking Using Two Leap Motion Devices (BA/MA)== | + | Computer vision is an important part of autonomous robots. For robots, image sensors are the main source of information about the surrounding world. Each camera is different, even if they are from the same production line. For computer vision, especially for robots manipulating their environment, |

| - | {{ : | + | |

| - | Improving the skeletal tracking offered by the [[https:// | + | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. |

| - | The tracked hand can then be used as input for the Kitchen Activity Games framework. | + | {{ : |

| + | The system should: | ||

| + | * be independent of the camera type | ||

| + | * estimate intrinsic and extrinsic parameters | ||

| + | * calibrate depth images (case of RGB-D) | ||

| + | * integrate capabilities from Halcon [1] | ||

| + | * operate autonomously | ||

| - | Requirements: | + | Requirements: |

| - | * Good programming skills in C/C++ | + | * Good programming skills in Python and C/C++ |

| + | * ROS, OpenCV | ||

| - | Contact: | + | [1] http:// |

| - | == Fluid Simulation in Gazebo (BA/MA)== | + | Contact: [[team:alexis_maldonado|Alexis Maldonado]] and [[team: |

| - | | + | |

| - | [[http:// | + | == On-the-fly 3D CAD model creation |

| - | Currently there is an [[http:// | + | Create models during runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, |

| - | The computational method for the fluid simulation is SPH (Smoothed-Particle Hydrodynamics); however, newer and better methods based on SPH are now available | + | Requirements: |

| - | and should be implemented (PCISPH/IISPH). | + | * Good programming skills in C/C++ |

| + | * strong background in computer vision | ||

| + | * ROS, OpenCV, PCL | ||

| - | The interaction between the fluid and the rigid objects is a naive one, the forces and torques are applied only from the particle collisions (not taking into account pressure and other forces). | + | Contact: [[team: |

| - | Another topic would be the visualization | + | == Simulation |

| - | Here is a [[https:// | + | Create |

| - | Requirements: | + | Requirements: |

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| - | * Interest | + | * strong background |

| - | * Basic physics/rendering engine knowledge | + | * Gazebo, OpenCV, PCL |

| - | * Gazebo simulator and Fluidix | + | |

| + | Contact: [[team: | ||

| + | |||

| + | == Multi-expert segmentation of cluttered and occluded scenes == | ||

| + | |||

| + | Objects in a human environment are usually found in challenging scenes. They can be stacked upon each other, touching or occluding one another, or stored in drawers, cupboards, refrigerators and so on. To execute a task, a personal robot assistant needs to detect and recognize these objects. In this thesis a multi-modal approach to interpreting cluttered scenes is going to be investigated, | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C/C++ | ||

| + | * strong background in 3D vision | ||

| + | * basic knowledge of ROS, OpenCV, PCL | ||

| + | |||

| + | Contact: [[team: | ||

| - | Contact: [[team: | ||

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
