Differences

This shows you the differences between two versions of the page.


| jobs [2014/08/29 09:33] – [Theses and Jobs] ahaidu | jobs [2015/09/08 12:24] – [Theses and Jobs] nyga | ||

|---|---|---|---|

| Line 5: | Line 5: | ||

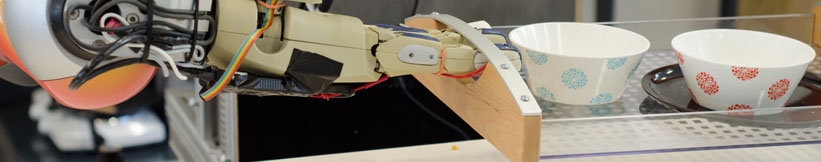

| - | == GPU-based Parallelization of Numerical Optimization Techniques | + | == Kitchen Activity Games in a Realistic Robotic Simulator |

| + | {{ : | ||

| - | In the field of Machine Learning, numerical optimization techniques play a focal role. However, as models grow larger, traditional implementations on single-core CPUs suffer from sequential execution causing | + | Developing new activities and improving |

| Requirements: | Requirements: | ||

| - | | + | * Good programming skills in C/C++ |

| - | | + | * Basic physics/ |

| + | * Gazebo simulator basic tutorials | ||

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| - | == Online Learning of Markov Logic Networks for Natural-Language Understanding | + | == Integrating Eye Tracking in the Kitchen Activity Games (BA/MA)== |

| + | {{ : | ||

| - | Markov Logic Networks (MLNs) combine | + | Integrating |

| Requirements: | Requirements: | ||

| - | * Experience | + | * Good programming skills |

| - | * Experience with statistical relational learning (e.g. MLNs) is helpful. | + | * Gazebo simulator basic tutorials |

| - | * Good programming skills in Python. | + | |

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| + | == Hand Skeleton Tracking Using Two Leap Motion Devices (BA/MA)== | ||

| + | {{ : | ||

| - | ==HiWi-Position: Knowledge Representation & Language Understanding for Intelligent Robots== | + | Improving the skeletal tracking offered by the [[https:// |

| - | In the context of the European research project RoboHow.Cog [1,2] we | + | The tracked hand can then be used as input for the Kitchen Activity Games framework. |

| - | are investigating methods for combining multimodal sources of knowledge (e.g. video, natural-language recipes or computer games), in order to enable mobile robots to autonomously acquire new high level skills like cooking meals or straightening up rooms. | + | |

| - | The Institute for Artificial Intelligence is hiring a student researcher for the | + | Requirements: |

| - | development and the integration of probabilistic methods | + | * Good programming skills |

| - | This HiWi-Position can serve as a starting point for future Bachelor' | + | Contact: [[team: |

| - | Tasks: | + | == Fluid Simulation in Gazebo |

| - | * Implementation of an interface to the Robot Operating System | + | {{ : |

| - | * Linkage of the knowledge base to the executive of the robot. | + | |

| - | * Support for the scientific staff in extending and integrating components onto the robot platform PR2. | + | |

| - | Requirements: | + | [[http:// |

| - | * Studies in Computer Science | + | |

| - | * Basic skills | + | |

| - | * Optional: basic skills | + | |

| - | * Optional: basic skills in Machine Learning | + | |

| - | * Good programming skills in Python and Java | + | |

| - | + | ||

| - | Hours: 10-20 h/week | + | |

| - | Contact: [[team:daniel_nyga|Daniel Nyga]] | + | Currently there is an [[http:// |

| - | [1] www.robohow.eu\\ | + | The computational method for the fluid simulation is SPH (smoothed-particle hydrodynamics); however, newer and better methods based on SPH are now available |

| - | [2] http://www.youtube.com/ | + | and should be implemented (PCISPH/IISPH). |

| + | The interaction between the fluid and the rigid objects is naive: forces and torques are applied only from particle collisions, without taking pressure and other forces into account. | ||

| - | == Depth-Adaptive Superpixels (BA/MA)== | + | Another topic would be the visualization |

| - | {{ : | + | |

| - | We are currently investigating a new set of sensors (RGB-D-T), which is a combination of a kinect with a thermal image camera. Within this project we want to enhance | + | |

| - | Since the current | + | Here is a [[https:// |

| Requirements: | Requirements: | ||

| - | | + | * Good programming skills in C/C++ |

| - | | + | * Interest in Fluid simulation |

| - | * Experience with CUDA is helpful | + | * Basic physics/ |

| + | * Gazebo simulator and Fluidix basic tutorials | ||

| - | Contact: [[team:jan-hendrik_worch|Jan-Hendrik Worch]] | + | Contact: [[team:andrei_haidu|Andrei Haidu]] |

| - | == Physical Simulation of Humans | + | == Automated sensor calibration toolkit |

| - | {{ : | + | |

| - | For tracking people, the use of particle filters | + | Computer vision is an important part of autonomous robots. |

| - | Requirements: | + | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. |

| - | * Good programming skills in C/C++ | + | |

| - | * Optional: Experience in working with physics libraries such as Bullet | + | |

| - | Contact: [[team:jan-hendrik_worch|Jan-Hendrik Worch]] | + | |

| + | The system should: | ||

| + | * be independent of the camera type | ||

| + | * estimate intrinsic and extrinsic parameters | ||

| + | * calibrate depth images (in the case of RGB-D) | ||

| + | * integrate capabilities from Halcon [1] | ||

| + | * operate autonomously | ||

| - | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/MA/HiWi)== | + | Requirements: |

| - | {{ : | + | * Good programming skills |

| + | * ROS, OpenCV | ||

| - | Developing new activities and improving the current simulation framework done under the Gazebo robotic simulator. Creating a custom GUI for the game, in order to launch new scenarios, save logs etc. | + | [1] http://www.halcon.de/ |

| - | Requirements: | + | Contact: [[team: |

| + | |||

| + | == On-the-fly 3D CAD model creation (MA)== | ||

| + | |||

| + | Create models during runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, | ||

| + | |||

| + | Requirements: | ||

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| - | * Basic physics/ | + | * strong background in computer vision |

| - | * Gazebo simulator basic tutorials | + | * ROS, OpenCV, PCL |

| - | Contact: [[team:andrei_haidu|Andrei Haidu]] | + | Contact: [[team:thiemo_wiedemeyer|Thiemo Wiedemeyer]] |

| - | == Integrating Eye Tracking in the Kitchen Activity Games (BA/MA)== | + | == Simulation of a robot's belief state to support perception (MA) == |

| - | {{ : | + | |

| - | Integrating | + | Create a simulation environment that represents |

| - | and logging the gaze of the user during the gameplay. From this information, typical activities should be inferred. | + | |

| - | Requirements: | + | Requirements: |

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| - | * Gazebo | + | |

| + | | ||

| - | Contact: [[team:andrei_haidu|Andrei Haidu]] | + | Contact: [[team:ferenc_balint-benczedi|Ferenc Balint-Benczedi]] |

| - | == Hand Skeleton Tracking Using Two Leap Motion Devices (BA/MA)== | + | == Multi-expert segmentation of cluttered and occluded scenes |

| - | {{ : | + | |

| - | Improving the skeletal tracking offered by the Leap Motion SDK, by using two devices (one tracking vertically, the other horizontally) | + | Objects in a human environment are usually found in challenging scenes. They can be stacked upon each other, touching or occluding, can be found in drawers, cupboards, refrigerators |

| - | The tracked hand can then be used as input for the Kitchen Activity Games framework. | + | Requirements: |

| - | + | ||

| - | Requirements: | + | |

| * Good programming skills in C/C++ | * Good programming skills in C/C++ | ||

| + | * strong background in 3D vision | ||

| + | * basic knowledge of ROS, OpenCV, PCL | ||

| - | Contact: [[team:andrei_haidu|Andrei Haidu]] | + | Contact: [[team:ferenc_balint-benczedi|Ferenc Balint-Benczedi]] |

Prof. Dr. hc. Michael Beetz PhD

Head of Institute

Contact via

Andrea Cowley

assistant to Prof. Beetz

ai-office@cs.uni-bremen.de
