Differences

This shows you the differences between two versions of the page.


| jobs [2014/08/29 09:33] – [Theses and Jobs] ahaidu | jobs [2015/03/19 13:27] – [Theses and Jobs] raider | ||

|---|---|---|---|

| Line 56: | Line 56: | ||

| [2] http:// | [2] http:// | ||

| - | |||

| - | == Depth-Adaptive Superpixels (BA/MA)== | ||

| - | {{ : | ||

| - | We are currently investigating a new set of sensors (RGB-D-T), which combines a Kinect with a thermal imaging camera. Within this project we want to enhance the Depth-Adaptive Superpixels (DASP) to make use of the thermal sensor data. Depth-Adaptive Superpixels oversegment an image, taking into account the depth value of each pixel. | ||

| - | |||

| - | Since the current implementation of DASP does not perform well on high-resolution images, there are several options for a project in this field, such as reimplementing DASP using CUDA, investigating how thermal data can be integrated, ... | ||

| - | |||

| - | Requirements: | ||

| - | * Basic knowledge of image processing | ||

| - | * Good programming skills in C/C++. | ||

| - | * Experience with CUDA is helpful | ||

| - | |||

| - | Contact: [[team: | ||

| - | |||

| - | |||

| - | == Physical Simulation of Humans (BA/MA)== | ||

| - | {{ : | ||

| - | |||

| - | For tracking people, the use of particle filters is a common approach. However, the quality of those filters heavily depends on the way particles are spread. In this thesis, a library for the physical simulation of a human model is to be implemented. | ||

| - | |||

| - | Requirements: | ||

| - | * Good programming skills in C/C++ | ||

| - | * Optional: Experience in working with physics libraries such as Bullet | ||

| - | |||

| - | Contact: [[team: | ||

| == Kitchen Activity Games in a Realistic Robotic Simulator (BA/ | == Kitchen Activity Games in a Realistic Robotic Simulator (BA/ | ||

| {{ : | {{ : | ||

| - | Developing new activities and improving the current simulation framework done under the Gazebo robotic simulator. Creating a custom GUI for the game, in order to launch new scenarios, save logs etc. | + | Developing new activities and improving the current simulation framework done under the [[http:// |

| Requirements: | Requirements: | ||

| Line 97: | Line 72: | ||

| {{ : | {{ : | ||

| - | Integrating the eye tracker in the Gazebo based Kitchen Activity Games framework | + | Integrating the eye tracker in the [[http:// |

| - | and logging the gaze of the user during the gameplay. From the information typical activities should be inferred. | + | |

| Requirements: | Requirements: | ||

| Line 109: | Line 83: | ||

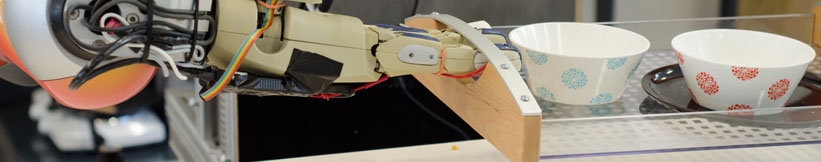

| {{ : | {{ : | ||

| - | Improving the skeletal tracking offered by the Leap Motion SDK, by using two devices (one tracking vertically, the other horizontally) and switching between them to the one that has the best current view of the hand. | + | Improving the skeletal tracking offered by the [[https:// |

| The tracked hand can then be used as input for the Kitchen Activity Games framework. | The tracked hand can then be used as input for the Kitchen Activity Games framework. | ||

| Line 117: | Line 91: | ||

| Contact: [[team: | Contact: [[team: | ||

| + | |||

| + | == Fluid Simulation in Gazebo (BA/MA)== | ||

| + | {{ : | ||

| + | |||

| + | [[http:// | ||

| + | |||

| + | Currently there is an [[http:// | ||

| + | |||

| + | The computational method for the fluid simulation is SPH (smoothed-particle hydrodynamics); however, newer and better SPH-based methods are now available | ||

| + | and should be implemented (PCISPH/ | ||

| + | |||

| + | The interaction between the fluid and the rigid objects is naive: forces and torques are applied only from the particle collisions, without taking pressure and other forces into account. | ||

| + | |||

| + | Another topic would be the visualization of the fluid, which is currently done by rendering every particle. For the rendering engine [[http:// | ||

| + | |||

| + | Here is a [[https:// | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C/C++ | ||

| + | * Interest in fluid simulation | ||

| + | * Basic physics/ | ||

| + | * Gazebo simulator and Fluidix basic tutorials | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | |||

| + | == Automated sensor calibration toolkit (MA)== | ||

| + | |||

| + | Computer vision is an important part of autonomous robots. For robots, image sensors are the main source of information about the surrounding world. Each camera is different, even if they are from the same production line. For computer vision, especially for robots manipulating their environment, | ||

| + | |||

| + | The topic for this thesis is to develop an automated system for calibrating cameras, especially RGB-D cameras like the Kinect v2. | ||

| + | |||

| + | {{ : | ||

| + | The system should: | ||

| + | * be independent of the camera type | ||

| + | * estimate intrinsic and extrinsic parameters | ||

| + | * calibrate depth images (case of RGB-D) | ||

| + | * integrate capabilities from Halcon [1] | ||

| + | * operate autonomously | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in Python and C/C++ | ||

| + | * ROS, OpenCV | ||

| + | |||

| + | [1] http:// | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | == On-the-fly 3D CAD model creation (MA)== | ||

| + | |||

| + | Create models at runtime for unknown textured objects based on depth and color information. Track the object and update the model with more detailed information, | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C/C++ | ||

| + | * strong background in computer vision | ||

| + | * ROS, OpenCV, PCL | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | == Simulation of a robot's belief state to support perception (MA) == | ||

| + | |||

| + | Create a simulation environment that represents the robot's current belief state and can be updated frequently. Use off-screen rendering to investigate the affordances these objects possess, in order to support segmentation, | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C/C++ | ||

| + | * strong background in computer vision | ||

| + | * Gazebo, OpenCV, PCL | ||

| + | |||

| + | Contact: [[team: | ||

| + | |||

| + | == Multi-expert segmentation of cluttered and occluded scenes == | ||

| + | |||

| + | Objects in a human environment are usually found in challenging scenes. They can be stacked upon each other, touching or occluding one another, or located in drawers, cupboards, refrigerators and so on. To execute a task, a personal robot assistant needs to detect and recognize these objects. In this thesis a multi-modal approach to interpreting cluttered scenes will be investigated, | ||

| + | |||

| + | Requirements: | ||

| + | * Good programming skills in C/C++ | ||

| + | * strong background in 3D vision | ||

| + | * basic knowledge of ROS, OpenCV, PCL | ||

| + | |||

| + | Contact: [[team: | ||
