Differences

This shows you the differences between two versions of the page.


| robocup19 [2018/11/29 16:08] – s_7teji4 | robocup19 [2019/01/09 08:31] – s_7teji4 | ||

|---|---|---|---|

| Line 3: | Line 3: | ||

| === Motivation and Goal=== | === Motivation and Goal=== | ||

| < | < | ||

| - | < | + | < |

| - | | + | < |

| <ul style=" | <ul style=" | ||

| < | < | ||

| Line 13: | Line 13: | ||

| < | < | ||

| </ul> | </ul> | ||

| - | </h5> | ||

| </ | </ | ||

| </ | </ | ||

| === Team === | === Team === | ||

| - | {{ : | + | {{ : |

| < | < | ||

| + | <br> | ||

| <p align=" | <p align=" | ||

| Alina Hawkin <br> hawkin[at]uni-bremen.de | Alina Hawkin <br> hawkin[at]uni-bremen.de | ||

| Line 37: | Line 37: | ||

| Vanessa Hassouna <br> hassouna[at]uni-bremen.de | Vanessa Hassouna <br> hassouna[at]uni-bremen.de | ||

| </p> | </p> | ||

| - | </ | + | < |

| - | === Methodology and implementation=== | + | < |

| + | </ | ||

| + | ===Methodology and implementation=== | ||

| < | < | ||

| <div display=" | <div display=" | ||

| Line 44: | Line 46: | ||

| < | < | ||

| In order to perform the task, the robot needs to navigate the world autonomously and safely. This includes building a 2D map of its surroundings, so that a path can be calculated to take the robot from point A to point B. Objects that are not part of the map, e.g. movable objects like chairs or people who cross the robot's path, also have to be detected and avoided. Together, these capabilities allow the robot to navigate the environment safely. | In order to perform the task, the robot needs to navigate the world autonomously and safely. This includes building a 2D map of its surroundings, so that a path can be calculated to take the robot from point A to point B. Objects that are not part of the map, e.g. movable objects like chairs or people who cross the robot's path, also have to be detected and avoided. Together, these capabilities allow the robot to navigate the environment safely. | ||

| + | <iframe width=" | ||

| </ | </ | ||

| < | < | ||

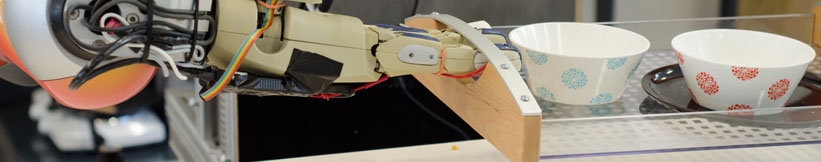

| Manipulation is the control of the movements of the robot's joints and links. This allows the robot to move correctly and carry out orders coming from the upper layer. Depending on the values received, the robot can, for example, grasp objects or deposit them. | Manipulation is the control of the movements of the robot's joints and links. This allows the robot to move correctly and carry out orders coming from the upper layer. Depending on the values received, the robot can, for example, grasp objects or deposit them. | ||

| Giskardpy is an important library for calculating movements. Manipulation is implemented in Python with ROS, using the iai_kinematic_sim and iai_hsr_sim libraries in addition to Giskardpy. | Giskardpy is an important library for calculating movements. Manipulation is implemented in Python with ROS, using the iai_kinematic_sim and iai_hsr_sim libraries in addition to Giskardpy. | ||

| + | </ | ||

| </p> | </p> | ||

| < | < | ||

| Planning connects perception, knowledge, manipulation and navigation by creating plans for the robot's activities. Here, we develop generic strategies for the robot so that it can decide which action should be executed in which situation and in which order. One task of planning is failure handling and providing recovery strategies. | Planning connects perception, knowledge, manipulation and navigation by creating plans for the robot's activities. Here, we develop generic strategies for the robot so that it can decide which action should be executed in which situation and in which order. One task of planning is failure handling and providing recovery strategies. | ||

| + | </p> | ||

| + | < | ||

| + | The perception module has the task to process the visual data received by the robot' | ||

| + | </ | ||

| </p> | </p> | ||

| </ | </ | ||

| Line 56: | Line 64: | ||

| === Link to open source and research === | === Link to open source and research === | ||

| + | < | ||

| + | < | ||

| + | < | ||

| + | < | ||

| + | < | ||

| + | </ | ||

| + | </ | ||
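The navigation paragraph in the diff above describes calculating a path from point A to point B on a 2D map. The following is a minimal sketch of that idea only, assuming a 4-connected occupancy grid and a plain breadth-first search. The page does not say which planner the team uses, so the function name `plan_path`, the grid encoding (0 = free, 1 = occupied) and the example map are all hypothetical.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search on a 4-connected occupancy grid (illustrative only).

    grid[r][c] == 1 marks an occupied cell, 0 a free cell (assumed encoding).
    Returns a list of (row, col) cells from start to goal, or None if the
    goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}  # visited cells and how each one was reached
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the parents to reconstruct the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


# Hypothetical 3x3 map: a wall in the middle row with a gap on the right.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
path = plan_path(grid, (0, 0), (2, 0))

# An obstacle that is not in the map (e.g. a chair) can be handled in this
# sketch by marking its cell as occupied and planning again.
blocked = [row[:] for row in grid]
blocked[1][2] = 1
replanned = plan_path(blocked, (0, 0), (2, 0))
```

Breadth-first search is used here because it is short and returns a shortest path in number of grid steps; a real robot would more likely rely on a cost-aware planner from its navigation framework.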
